National Action Council for Minorities in Engineering (NACME) Google Applied Machine Learning Intensive (AMLI) at the University of Kentucky

Developed by:

- Javi Cardenas - University of Illinois at Urbana-Champaign
- Jalen Collins - University of Kentucky
- Patricia Garcia - University of Southern California

Mentor:

- Tasmia Tarin - University of Kentucky

This project depends on several packages, which are installed using:

pip install -r vist_requirements.txt

- Fork this repo

- Change directories into your project

- On the command line, type

pip3 install -r requirements.txt

- ....

Pickle the images you are using with Resnet50FeatureExtraction.ipynb, and place the pickled files together in one folder. This folder is your pickled image directory.
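As a rough illustration of what a pickled image directory can look like, the sketch below writes one pickle file per image and reads one back. It is not the notebook's code: the function names `save_pickled_features` and `load_pickled_feature`, the one-file-per-image layout, and the `<image_id>.pkl` naming are assumptions made for illustration. Check Resnet50FeatureExtraction.ipynb for the layout the main code actually expects.

```python
import pickle
from pathlib import Path


def save_pickled_features(features, out_dir):
    """Write one .pkl file per image into out_dir and return the paths written.

    `features` maps an image ID to whatever object should be stored for it
    (for example, a feature vector). The ID-to-file naming is hypothetical.
    """
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)  # create the folder if needed
    written = []
    for image_id, vector in features.items():
        path = out_dir / f"{image_id}.pkl"
        with path.open("wb") as fh:
            pickle.dump(vector, fh, protocol=pickle.HIGHEST_PROTOCOL)
        written.append(path)
    return written


def load_pickled_feature(image_id, pickle_dir):
    """Read back the object stored for a single image ID."""
    with (Path(pickle_dir) / f"{image_id}.pkl").open("rb") as fh:
        return pickle.load(fh)
```

Calling `save_pickled_features({"img_001": [0.1, 0.2]}, "pickled_images")` would create `pickled_images/img_001.pkl`. Only unpickle files you created yourself, since loading a pickle can execute arbitrary code.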

Make sure you have downloaded the dictionaries folder, which holds the keys used in the main code.

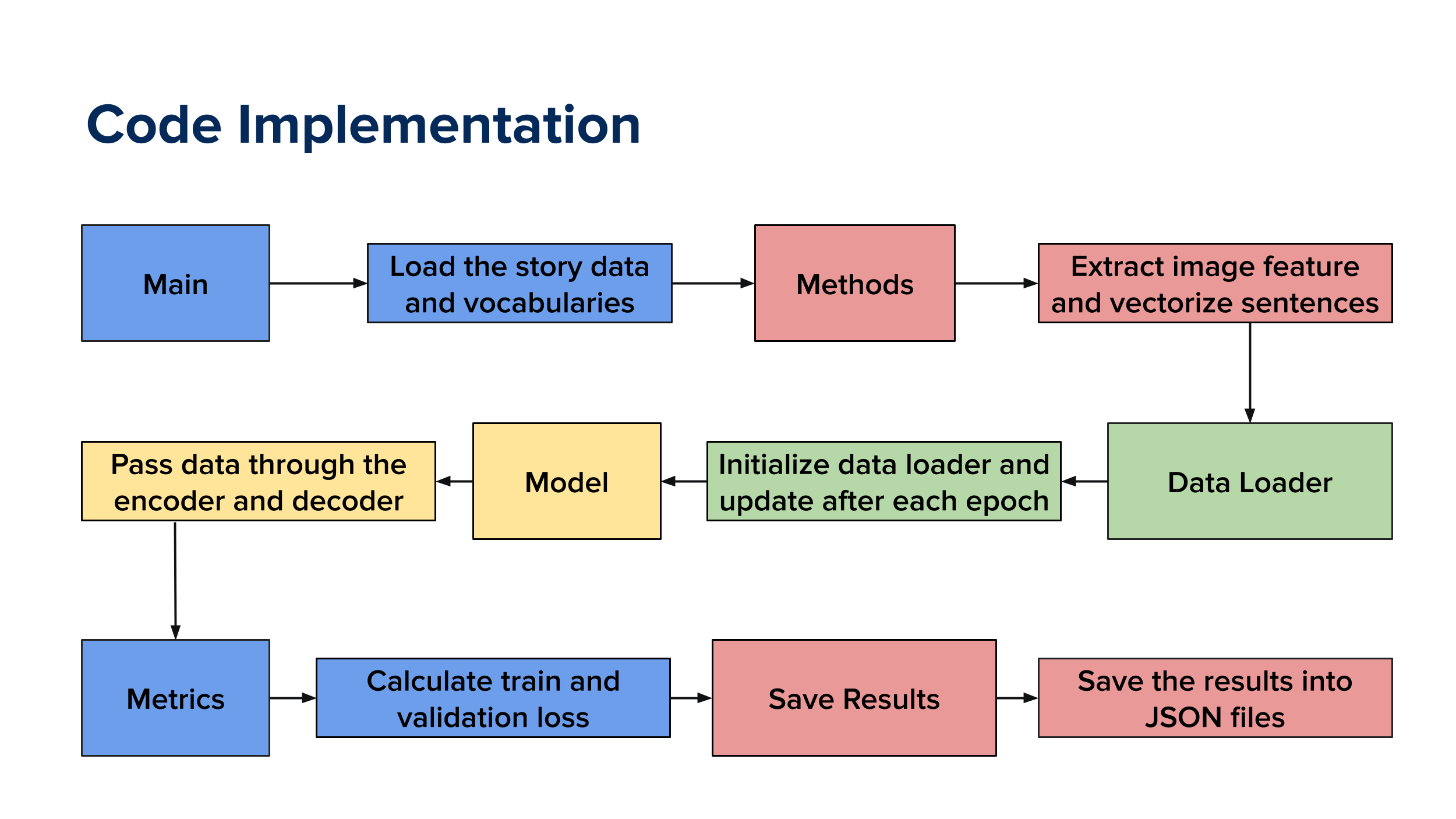

From there, you should have everything you need to run the model. Below you'll see the architecture that the model follows.