This repository implements “Predicting Spatio-Temporal Entropic Differences for Robust No Reference Video Quality Assessment” in Keras/TensorFlow. If you use this code, please cite the following article:

S. Mitra, R. Soundararajan, and S. S. Channappayya, “Predicting Spatio-Temporal Entropic Differences for Robust No Reference Video Quality Assessment,” IEEE Signal Processing Letters, vol. 28, pp. 170–174, 2021.

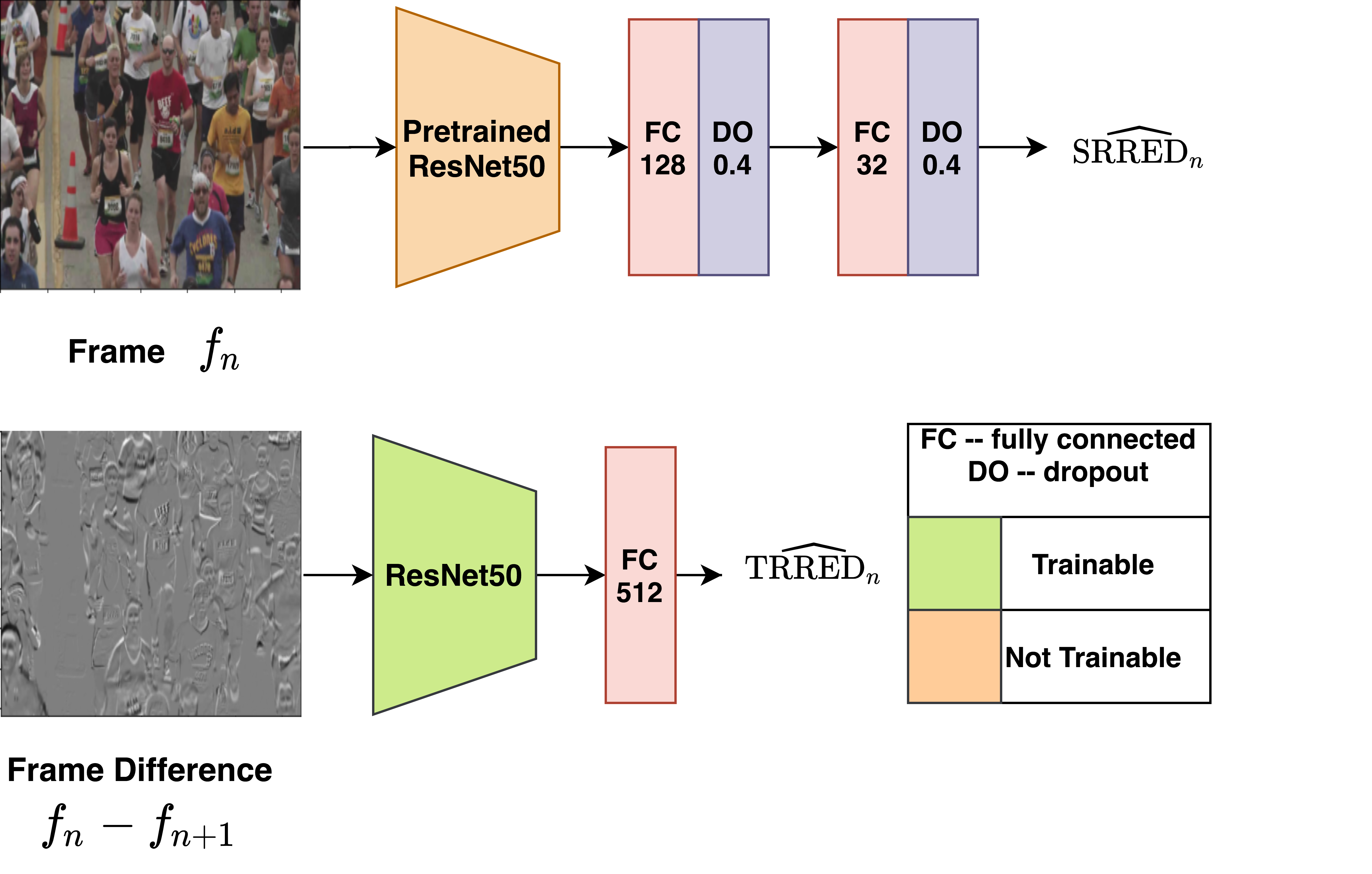

The script feature_generate_resnet50.py produces spatially aware quality features using pre-trained ResNet50 Keras weights.
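As a rough illustration of per-frame feature extraction with a pre-trained ResNet50, here is a minimal sketch. It is not the code in feature_generate_resnet50.py: the function names `build_feature_extractor` and `extract_features`, the use of global average pooling, and the 224x224 input size are all assumptions made for this example.

```python
import numpy as np
import tensorflow as tf


def build_feature_extractor(weights="imagenet"):
    """Build a ResNet50 without its classifier head (hypothetical helper).

    include_top=False drops the ImageNet classifier, and pooling="avg"
    (an assumption of this sketch) yields one 2048-d vector per frame.
    """
    return tf.keras.applications.ResNet50(
        weights=weights, include_top=False, pooling="avg"
    )


def extract_features(model, frames_rgb):
    """Return one feature vector per RGB frame (hypothetical helper).

    frames_rgb: array of shape (N, H, W, 3) with values in [0, 255].
    """
    # Copy to float32 so preprocessing does not modify the caller's array.
    x = np.array(frames_rgb, dtype=np.float32)
    # Apply the channel ordering and mean subtraction ResNet50 expects.
    x = tf.keras.applications.resnet50.preprocess_input(x)
    return model.predict(x, verbose=0)
```

Calling `build_feature_extractor()` with the default argument downloads the ImageNet weights on first use; the resulting model can then be passed to `extract_features` together with a batch of frames.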

Use vid2frame&framediff.py to generate frame differences from videos; these are used as input to the temporal learning model.
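The idea of a frame difference can be sketched with NumPy alone. This is only an illustration under stated assumptions, not the repository's script: the function name `frame_differences` is hypothetical, the frames are assumed to be already decoded into an array, and the difference is assumed to be taken between consecutive frames.

```python
import numpy as np


def frame_differences(frames):
    """Return differences between consecutive frames (hypothetical helper).

    frames: array of shape (T, H, W) or (T, H, W, C).
    Returns a float32 array of shape (T - 1, ...).
    """
    # Convert to float32 first so uint8 subtraction cannot wrap around.
    frames = np.asarray(frames, dtype=np.float32)
    if frames.shape[0] < 2:
        raise ValueError("need at least two frames to form a difference")
    return frames[1:] - frames[:-1]


# Tiny synthetic "video": 3 frames of size 1x2.
video = np.array([[[10, 20]], [[13, 18]], [[13, 25]]], dtype=np.uint8)
diffs = frame_differences(video)
print(diffs.shape)  # (2, 1, 2)
```

Decoding a video file into frames (for example with OpenCV) is left out of the sketch to keep it dependency-light.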

Use allvs1_spatial_rred.py to train the spatial RRED model and generate its predictions on any number of datasets. This script requires the ResNet50 features of the video frames as input.

allvs1_temporal_rred.py trains the temporal learning model from scratch on the given training databases.

The overall NR-STED index of each video is predicted using test_st_rredmap_framelvl.py, which takes the trained temporal_rred model and the spatial_rred predictions as input.