Unrelated: it's interesting how @bigbike and @eundersander have almost the same avatar, what's the probability of that happening? 😄

| sensorRenderTarget->blitRgbaToDefault(); | ||

| // Immediately bind the main buffer back so that the "imgui" below can work | ||

| // properly | ||

| Mn::GL::defaultFramebuffer.bind(); |

There was a problem hiding this comment.

Why is this removed? What's the reason?

There was a problem hiding this comment.

Good catch. I was supposed to move it out of the bracket; this is definitely a bug I introduced. Will fix.

| default: | ||

| // Have to had this default, otherwise the clang-tidy will be pissed off, | ||

| // and will not let me pass | ||

| break; |

There was a problem hiding this comment.

😆

what was the error it gave you? this seems silly

There was a problem hiding this comment.

If it's really that bad, you can always use a magic NoLint comment.

There was a problem hiding this comment.

The CI test failed directly in the clang-tidy section.

It reports missing cases for the other enum values.

And look at this long list:

None = 0,

Color = 1,

Depth = 2,

Normal = 3,

Semantic = 4,

Path = 5,

...

There was a problem hiding this comment.

@Skylion007 : the "default" is not a bad concept.

There was a problem hiding this comment.

Oh, right, I didn't realize there are a lot more values. In that case the options are either enumerating them all or adding a default. Here the default probably makes sense.
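
For reference, the trade-off can be sketched like this. It is only an illustration, not the code from the diff: `SensorKind` is a hypothetical enum that mirrors just the values quoted above (the real list is longer), and `describe()` is a made-up helper.

```cpp
#include <cassert>
#include <string>

// Hypothetical enum with only the values quoted in the comment above;
// the real enum continues past Path.
enum class SensorKind {
  None = 0,
  Color = 1,
  Depth = 2,
  Normal = 3,
  Semantic = 4,
  Path = 5
};

// Hypothetical helper: names the visualization used for a sensor kind.
std::string describe(SensorKind kind) {
  switch (kind) {
    case SensorKind::Depth:
      return "depth visualization";
    case SensorKind::Semantic:
      return "semantic visualization";
    default:
      // All remaining values deliberately share one fallback path, so the
      // switch stays short even when the enum grows.
      return "no visualization";
  }
}
```

The cost of `default:` is that the compiler no longer warns when a newly added enum value should have received its own case, which is why enumerating everything is the usual alternative for short enums.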

| // depth buffer is 24-bit integer pixel | ||

| depthBuffer_.setStorage(Mn::GL::RenderbufferFormat::DepthComponent24, | ||

| framebufferSize); | ||

| frameBuffer_.attachRenderbuffer( | ||

| Mn::GL::Framebuffer::BufferAttachment::Depth, depthBuffer_); |

There was a problem hiding this comment.

Do you actually need the depth buffer? Isn't it only rendering a single textured triangle, no matter what kind of data gets visualized?

There was a problem hiding this comment.

I need a depth buffer later if I would like to "interact". For example, if I would like to select and highlight some objects. (I need to draw these objects on top of this framebuffer.)

| void SensorInfoVisualizer::blitRgbaToDefault() { | ||

| frameBuffer_.mapForRead(Mn::GL::Framebuffer::ColorAttachment{0}); | ||

| CORRADE_ASSERT( | ||

| frameBuffer_.viewport() == Mn::GL::defaultFramebuffer.viewport(), | ||

| "SensorInfoVisualizer::blitRgbaToDefault(): a mismatch on viewport" | ||

| "between current framebuffer and the default framebuffer.", ); | ||

| Mn::GL::AbstractFramebuffer::blit( | ||

| frameBuffer_, Mn::GL::defaultFramebuffer, frameBuffer_.viewport(), | ||

| Mn::GL::defaultFramebuffer.viewport(), Mn::GL::FramebufferBlit::Color, | ||

| Mn::GL::FramebufferBlitFilter::Nearest); | ||

| } |

There was a problem hiding this comment.

Actually, is the extra framebuffer needed at all? Since the only use of this visualizer is in the viewer, where you do want to have it in the default framebuffer anyway.

I'd personally just have draw() take the target framebuffer it renders to and that's it. I don't see a reason why there would need to be yet another render target for visualization operations.

There was a problem hiding this comment.

See my comments below.

| setUniform(uniformLocation("depthTexture"), DepthTextureUnit); | ||

| depthUnprojectionUniform_ = uniformLocation("depthUnprojection"); | ||

| CORRADE_INTERNAL_ASSERT(depthUnprojectionUniform_ != ID_UNDEFINED); |

There was a problem hiding this comment.

I remember we discussed this once already, but I feel like it needs repeating -- ID_UNDEFINED is Habitat-specific, it has no relation to Magnum, and if it ever changes for some reason, the assert will no longer work.

If you want to avoid using the -1 because "coding guidelines forbid magic constants" etc, I'd suggest doing this instead:

CORRADE_INTERNAL_ASSERT(depthUnprojectionUniform_ >= 0);

Same elsewhere.
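
A small sketch of why the sign check is more robust, assuming a GL-style lookup that returns a negative location when the uniform is not found (OpenGL's `glGetUniformLocation` returns -1 in that case). `findUniform()` and `kProjectUndefined` are hypothetical stand-ins, not Magnum or Habitat APIs.

```cpp
#include <cassert>
#include <map>
#include <string>

// Hypothetical stand-in for a GL-style uniform lookup: -1 means "not found".
int findUniform(const std::map<std::string, int>& activeUniforms,
                const std::string& name) {
  auto it = activeUniforms.find(name);
  return it == activeUniforms.end() ? -1 : it->second;
}

// Depends only on the GL convention that valid locations are non-negative.
bool isValidLocation(int location) {
  return location >= 0;
}

// Hypothetical project-wide sentinel. If its value ever differs from -1,
// a "!= sentinel" check silently accepts a failed lookup.
constexpr int kProjectUndefined = -2;

bool isValidBySentinel(int location) {
  return location != kProjectUndefined;
}
```

With the sentinel set to anything other than -1, `isValidBySentinel(findUniform(uniforms, "missing"))` returns true even though the lookup failed, which is exactly the failure mode described above.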

There was a problem hiding this comment.

Will do.

I did not remember we discussed this before.

| #ifndef ESP_GFX_DEPTHVISUALIZERSHADER_H_ | ||

| #define ESP_GFX_DEPTHVISUALIZERSHADER_H_ | ||

| #include <Corrade/Containers/EnumSet.h> |

| @brief helper class to visualize undisplayble info such as depth, semantic ids | ||

| from the sensor on a framebuffer | ||

| */ | ||

| class SensorInfoVisualizer { |

There was a problem hiding this comment.

Why not just SensorVisualizer?

| // setup the shader | ||

| Mn::Resource<Mn::GL::AbstractShaderProgram, gfx::DepthVisualizerShader> | ||

| shader = visualizer.getShader<gfx::DepthVisualizerShader>( | ||

| gfx::SensorInfoType::Depth); | ||

| shader->bindDepthTexture(renderTarget().getDepthTexture()) | ||

| .setDepthUnprojection(*depthUnprojection()) | ||

| .setDepthScaling(depthScaling); | ||

| // draw to the framebuffer | ||

| visualizer.draw(shader.key()); |

There was a problem hiding this comment.

I don't understand the API design here. Why do you first retrieve a shader resource to set its uniforms but then pass just a resource key to draw() so draw() has to fetch the same shader resource again (and then do additional error handling)? Why not have just a draw(GL::AbstractShaderProgram&)? Or maybe even just expose the mesh and call a draw() on it directly from here.
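
To make the difference concrete, here is a rough sketch with stand-in types. `Shader` and `Visualizer` are hypothetical and only mimic the shape of the API under discussion; nothing here is Magnum or Habitat code.

```cpp
#include <cassert>
#include <map>
#include <stdexcept>
#include <string>

// Hypothetical stand-in for a shader program; counts how often it is drawn.
struct Shader {
  int drawCalls = 0;
};

// Hypothetical visualizer that owns the shaders (and, conceptually, the mesh).
class Visualizer {
 public:
  // Returns the shader for a key, creating it on first request.
  Shader& getShader(const std::string& key) { return shaders_[key]; }

  // Key-based variant: looks the shader up a second time and has to handle
  // the case where the key is unknown.
  void draw(const std::string& key) {
    auto it = shaders_.find(key);
    if (it == shaders_.end()) {
      throw std::out_of_range("unknown shader key: " + key);
    }
    draw(it->second);
  }

  // Reference-based variant: the caller already holds the shader, so there
  // is no lookup and no error path.
  void draw(Shader& shader) { ++shader.drawCalls; }

 private:
  std::map<std::string, Shader> shaders_;
};
```

The caller that configured the shader can hand the same object straight to `draw(Shader&)`; the key-based overload only adds a second lookup plus an error branch.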

Honestly the more I look at it, the less useful the overly generic SensorVisualizer seems to be. I'd make it actually aware of what it's doing instead of splitting the responsibilities partly between this class and the SensorVisualizer. That way:

- it can create the shader on-demand and set the uniforms on its own, exposing a simpler API to be used from VisualSensor

- thus you don't need to do the direct shader setup here (this is not a good place for such low-level stuff I'd say)

- and so you don't need the complex templated getShader() getter and neither the resource manager nor any of that stuff, you can just have the shader(s) one by one directly in the class as the class is fully aware of what it can and cannot do
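
A minimal sketch of what the list above describes, under the assumption that the visualizer knows it draws depth and owns its shader directly. `DepthShader` and `DepthVisualizer` are hypothetical stand-ins; the construction counter exists only to show that the shader is built once.

```cpp
#include <cassert>
#include <memory>

// Hypothetical stand-in for a shader that is expensive to construct.
struct DepthShader {
  static int constructed;  // how many shaders have been built so far
  float colorMapScale = 1.0f;
  DepthShader() { ++constructed; }
};
int DepthShader::constructed = 0;

// Hypothetical visualizer that is aware of what it draws.
class DepthVisualizer {
 public:
  // Builds the shader on first use, keeps it afterwards, and sets the
  // uniform itself so callers never touch the shader directly.
  void draw(float colorMapScale) {
    if (!shader_) {
      shader_ = std::make_unique<DepthShader>();
    }
    shader_->colorMapScale = colorMapScale;
    ++drawCalls_;
  }

  int drawCalls() const { return drawCalls_; }

 private:
  std::unique_ptr<DepthShader> shader_;  // empty until the first draw
  int drawCalls_ = 0;
};
```

Creating the shader lazily and keeping it as a member gives the "construct once, reuse" behavior without a resource manager or a templated getter.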

There was a problem hiding this comment.

Agreed, this should be more tightly integrated with Sensor. What's the benefit of this class? It really seems like we need a way to simplify shader setup so that it can be easily reused from VisualSensor.

Furthermore, doing it like that would make it easier to expose to Python for example. This feels like it would be a pain to expose to the Python viewer.

There was a problem hiding this comment.

To both of you: @mosra @Skylion007

Why do I need this class?

I need a container to hold the framebuffer, renderbuffer, shaders, the big triangle (GL mesh) as a canvas, etc. These resources are necessary to do the visualization. I tried to put them in the VisualSensor, but then VisualSensor became too big and too ugly. I also hope this infra can be used in the NonVisualSensor in the future.

Why do I need the framebuffer and renderbuffer?

If it is only for "visualization", yes, the default framebuffer is good enough. But if I need "interaction", it is not enough. For example, I am thinking it would be awesome if we can "select and highlight" some objects even in the depth or semantic visualization.

(I know I need to further change the shader in this case.)

Now answer the questions separately:

it can create the shader on-demand

Constructing a shader is expensive. I prefer to keep it once it is constructed. So I need the ResourceManager either in this class or in the VisualSensor. I prefer to do it in this SensorVisualizer class so that later after we have developed the NonVisualSensor I can use it as well.

I don't understand the API design here.

maybe even just expose the mesh

Yes, when I coded it up, I wished to call shader->draw() directly. But I found I needed the mesh, which was NOT exposed (and I do not want to expose it). I assumed that retrieving the shader via its key was cheap.

draw(GL::AbstractShaderProgram&)

OK, I can do it.

you can just have the shader(s) one by one directly in the class as the class is fully aware of what it can and cannot do

Putting all the shaders (there might be a lot in the future) one by one in which class, the VisualSensor class? It would become very ugly. I guess I do not understand you completely, do I? Hope you can explain a bit further.

Also I do not want to hold a copy of these shaders in every sensor. I need a container class to manage these shaders for me. So I have this SensorVisualizer class.

the complex templated getShader()

Not just for depth, semantic info, I am paving the way for the future, e.g., force, lidar, etc. Any info that is not directly displayable, but has certain ways to be visualized.

What's the benefit of this class?

See my above comments on why I need this class.

simplify shader setup so that it can be easily reused from VisualSensor.

I believe this is a clean way to avoid cluttering the VisualSensor.

doing it like that would make it easier to expose to Python for example

Would you have to expose the entire SensorVisualizer class simply because it appears as one parameter of the visualizeObservation function in VisualSensor?

| highp float r = originalDepth / depthScaling; | ||

| highp float b = 0.5 - 0.5 * r; | ||

| fragmentColor = vec4(r, r, b, 1.0); |

There was a problem hiding this comment.

I'd suggest to use colormaps for better visualization output -- DebugTools::ColorMap. Especially the Turbo color map is tuned to make minor differences nicely visible and the output uniformly bright.

Further, to make it more generic, I'd name it a "color map transform" instead of "depth scaling", as that allows you to later use the same API for semantic ID visualization as well. See how MeshVisualizer does it.
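
The "color map transform" idea can be sketched on the CPU as an offset and scale applied before the lookup. This is only an illustration: `applyColorMap()` is hypothetical, and the three-entry table is a placeholder ramp, not the Turbo values.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <cstddef>

struct Rgb {
  float r, g, b;
};

// Placeholder three-entry ramp (blue, green, red); NOT the Turbo color map.
const std::array<Rgb, 3> kColorMap{{
    {0.0f, 0.0f, 1.0f},
    {0.0f, 1.0f, 0.0f},
    {1.0f, 0.0f, 0.0f},
}};

// Maps a raw value to a color: offset + scale bring the value into [0, 1],
// then the nearest color map entry is picked.
Rgb applyColorMap(float value, float offset, float scale) {
  const float t = std::min(std::max(offset + scale * value, 0.0f), 1.0f);
  const std::size_t last = kColorMap.size() - 1;
  const std::size_t index = static_cast<std::size_t>(t * last + 0.5f);
  return kColorMap[index];
}
```

For depth up to 10 m one would pass `offset = 0` and `scale = 0.1`; for semantic IDs the same function works with `scale = 1 / maxId`, which is the point of naming the parameters generically.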

There was a problem hiding this comment.

Cool stuff. I will study it.

There was a problem hiding this comment.

@mosra: By the way, I understand you can apply such a ColorMap in the Magnum built-in MeshVisualizer shader.

Question: how can I apply it to my customized shader?

| #ifdef DEPTH_VISUALIZATER | ||

| highp float | ||

| #endif |

There was a problem hiding this comment.

I feel these #ifdefs are a bit excessive. Declare the variable once above for both cases so you don't need to do this.

Thinking more about this, I feel like two completely unrelated shaders are mashed up together. They're not even exposed through the same C++ class. Since this is for an interactive visualization and not ML, one extra pass won't matter, so what about

- unprojecting depth as usual using the original shader

- then have a shader that's dedicated just to visualization, which then processes the unprojected depth

That way the VisualizerShader doesn't need the depth-related inputs and can be thus easily adapted to visualizing any other rendered data, such as semantic IDs.
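
The two-pass split can be sketched on the CPU as follows. All names are hypothetical, and the unprojection uses the standard OpenGL perspective relation as an assumption; Habitat's actual depth shader may compute it differently.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Pass 1 (stand-in for the existing depth shader): converts a depth-buffer
// value in [0, 1] to linear eye-space depth for a perspective projection.
float unprojectDepth(float bufferDepth, float nearPlane, float farPlane) {
  const float ndcZ = 2.0f * bufferDepth - 1.0f;
  return 2.0f * nearPlane * farPlane /
         (farPlane + nearPlane - ndcZ * (farPlane - nearPlane));
}

// Pass 2 (visualization only): knows nothing about projections, it just
// normalizes a value into [0, 1]. Works for depth or any other scalar data.
float toIntensity(float value, float maxValue) {
  assert(maxValue > 0.0f);
  return std::fmin(std::fmax(value / maxValue, 0.0f), 1.0f);
}

// Chains the two passes over a whole buffer.
std::vector<float> visualizeDepth(const std::vector<float>& depthBuffer,
                                  float nearPlane, float farPlane) {
  std::vector<float> intensities;
  intensities.reserve(depthBuffer.size());
  for (float d : depthBuffer) {
    intensities.push_back(
        toIntensity(unprojectDepth(d, nearPlane, farPlane), farPlane));
  }
  return intensities;
}
```

Because `toIntensity()` takes no projection parameters, the same second pass could be fed semantic IDs instead of depth without any changes.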

There was a problem hiding this comment.

a shader that's dedicated just to visualization

I used to have a dedicated shader, but it was different from what you suggest here (one dedicated to processing the unprojected depth). My shader still did both depth unprojection and processing, like it does here. Then I thought you might ask why I did not leverage the existing depth.frag to avoid code duplication. Clearly my guess took the wrong direction this time. :)

Well, I guess the real question is whether I need the depth-related input.

Answer: it depends on whether I need "visualization ONLY" or "visualization + interaction".

Your comments are based on the former, while I was thinking of the latter.

I am actually considering adding depth in later (directly read from the depthRenderTexture and output to the gl_FragDepth) since it gives me the ability for "interaction".

I feel like I already spent more time on the review here than it would take to implement a

That way you:

@mosra: This diff is ready to be reviewed again.

mosra

left a comment

There was a problem hiding this comment.

Found just two very minor things.

I was wondering if it would be useful to allow the user to change the colormap used, but maybe do that only when someone really needs that. Turbo is a good default.

| #ifdef EXPLICIT_ATTRIB_LOCATION | ||

| layout(location = OUTPUT_ATTRIBUTE_LOCATION_COLOR) | ||

| #endif |

There was a problem hiding this comment.

I think this could do without the #ifdef EXPLICIT_ATTRIB_LOCATION around -- in Magnum that's only for compatibility with GL 2.1 / ES2 / WebGL 1, which you don't care about here.

(Also remove the related addSource() in the shader cpp, of course.)

Co-authored-by: Vladimír Vondruš <mosra@centrum.cz>

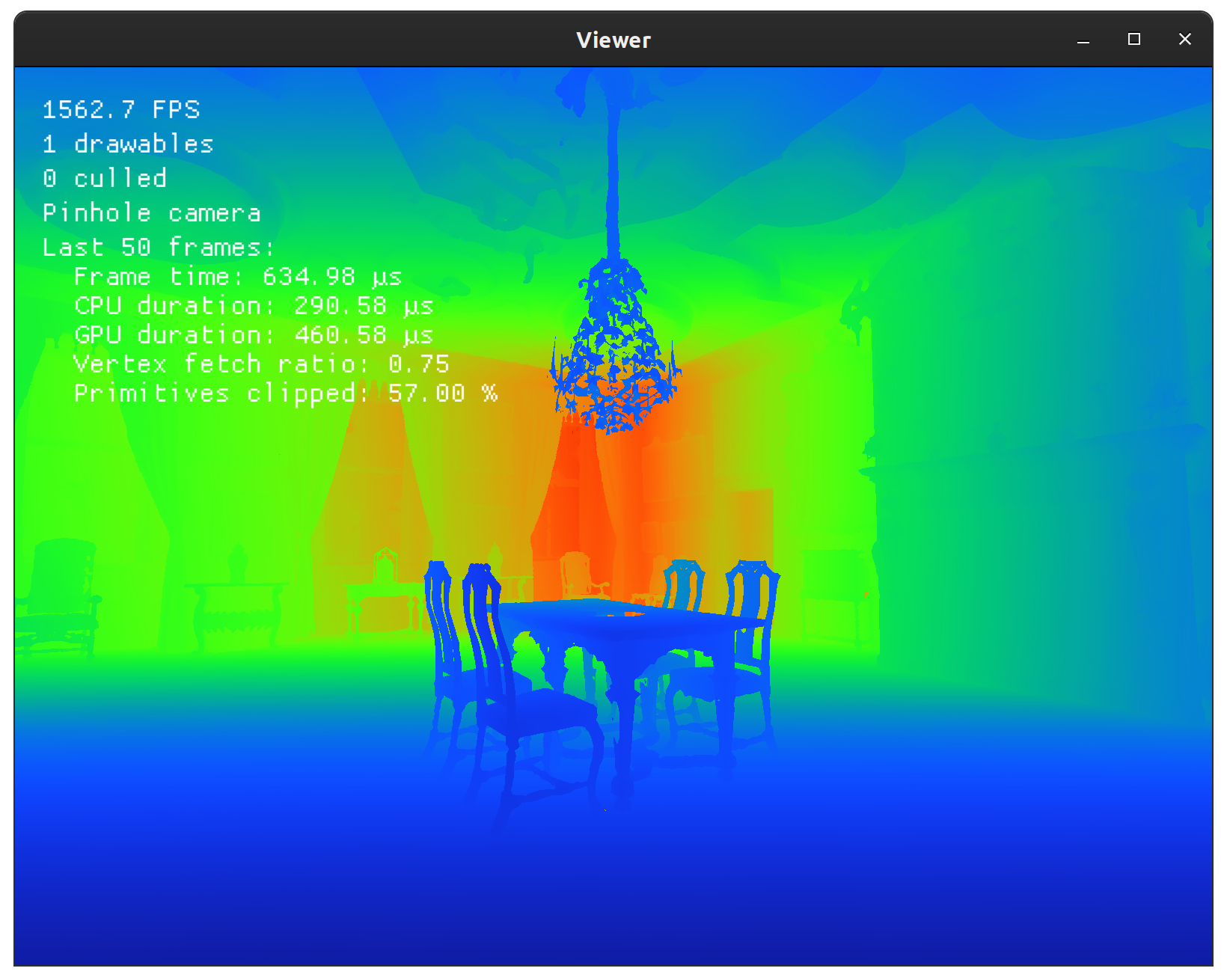

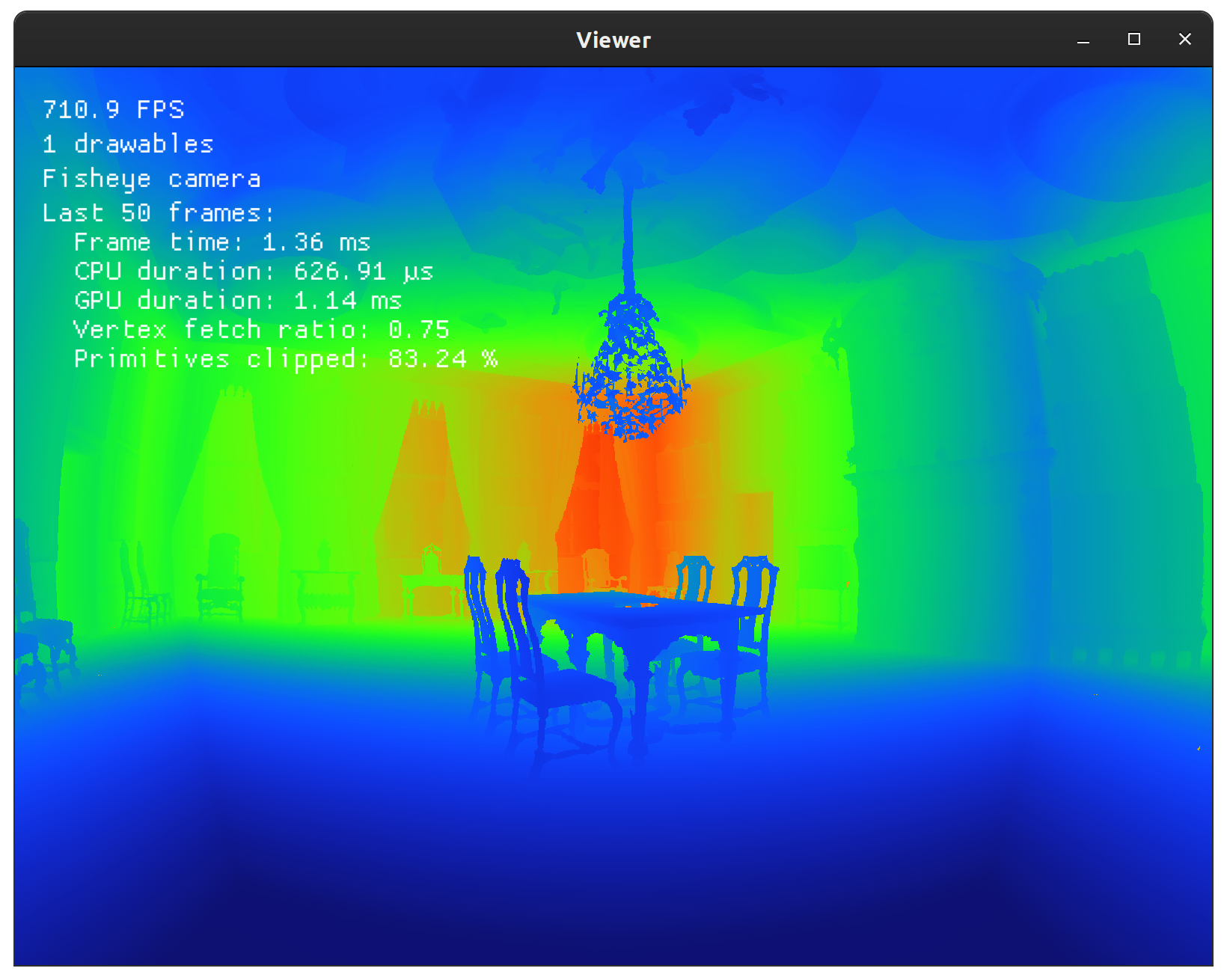

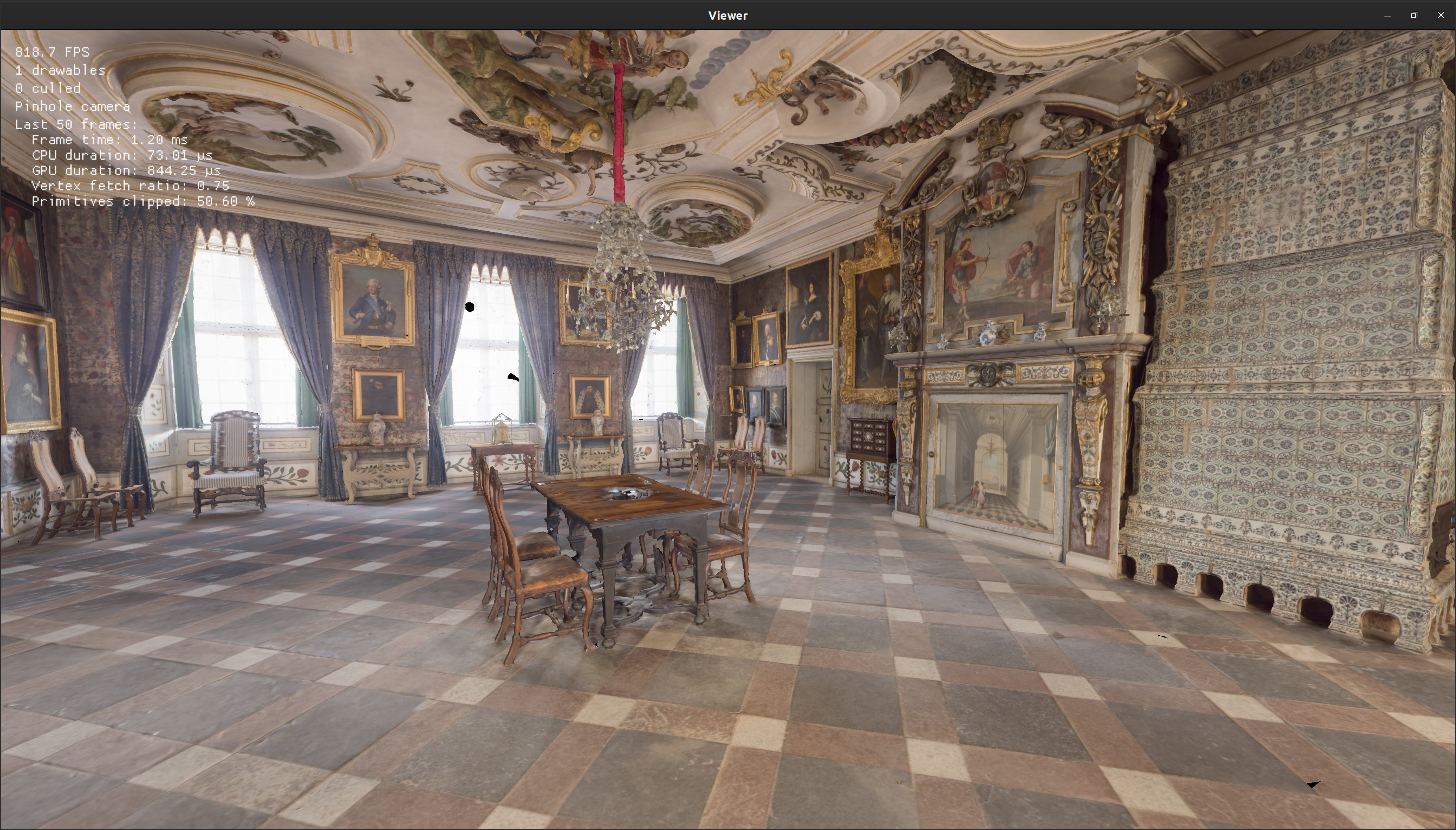

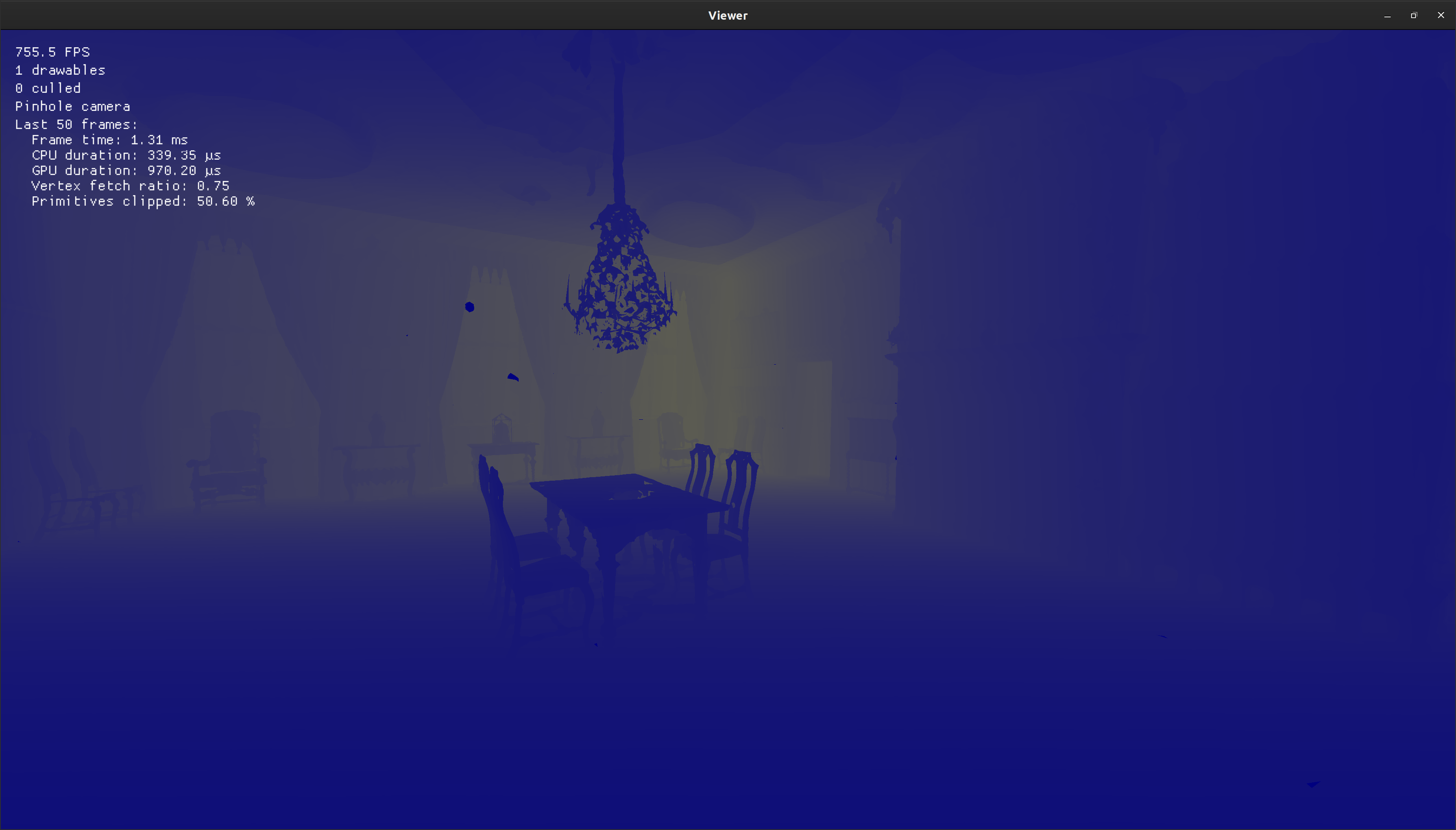

Motivation and Context

As titled. In the current GUI mode (viewer), there is no way to visualize depth info, which is not directly displayable. This diff addresses that problem. It adds:

- SensorInfoVisualizer as a helper class, which provides and manages the framebuffer, renderbuffer, and shaders that are necessary in the visualization.

- DepthVisualizerShader that leverages the existing depth.vert and depth.frag to visualize the depth info.

Latest results:

rgb depth:

Fisheye depth:

==== OBSOLETE ========

Regular RGB sensor:

Depth sensor in the same camera angle:

How Has This Been Tested

Types of changes

Checklist